There is a question that comes up constantly when brands start paying attention to their AI visibility: "Why is ChatGPT saying something about us that isn't true anymore?"

Or the flip side: "Why doesn't Perplexity know about the product we launched three months ago?"

If you’ve spent any time looking at your brand’s "ModelScore" or checking citations, you’ve probably noticed the discrepancy. You look great on Google, but in the AI world, you’re either invisible or stuck in 2022.

The answer is data freshness, but not in the way most SEOs think about it. The rules that govern how current your brand appears in AI answers are fundamentally different from the rules that govern Google rankings. Confusing the two leads to the wrong strategy, wasted budget, and a lot of frustration.

How Organic Search Handles Freshness (The Old Playbook)

Google's freshness model is well understood. It’s a linear pipeline. Googlebot crawls your pages on a schedule, indexes the content, and the search results reflect what was on your site when it was last crawled.

For most sites, that cycle happens within a few days to a few weeks. If you change your title tag today, it will likely show up in search results within a week. If you publish a new page and get it indexed, it can rank within days if the authority signals are right.

The freshness signal in organic search is essentially a timestamp. Google knows when it last crawled your content and how often that content changes. It weights recency accordingly for queries where freshness matters, such as breaking news, financial data, or current events.

The mental model is simple: Crawl, Index, Rank. The pipeline is continuous and the lag is measured in days.

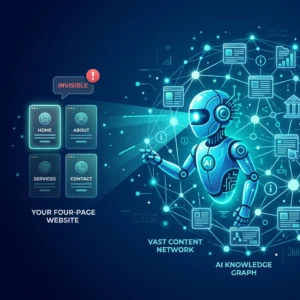

Visual: A clean comparison between the "Crawl, Index, Rank" linear flow of Google versus the multi-layered "Training vs. Retrieval" flow of AI models.

How AI Systems Handle Freshness: Two Different Problems

AI assistants have a freshness problem that’s actually two completely separate problems layered on top of each other. Treating them as one is the source of most bad AI visibility advice.

Problem 1: Training Data Cutoff

Every large language model (LLM) is trained on a massive snapshot of the internet that was frozen at a specific point in time, known as its training cutoff. The model has no "innate" knowledge of anything that happened after that date.

This isn't a crawl lag of days or weeks; it’s a gap of months to years. GPT-4’s training data has a cutoff. So does Claude’s, and so does Gemini’s. When these models answer from their training weights alone, they are drawing on knowledge that may be 12 to 24 months old.

The implication for your brand: if your company went through a rebrand, launched a new product line, or changed its positioning in the last two years, many AI models literally don't know it. They aren’t giving you stale results on purpose; they simply have no "memory" of your recent changes.
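To make the gap concrete, here is a small Python sketch. It checks whether a brand change could appear in a weights-only answer, and it counts how many months a model's knowledge lags behind the day it is asked. The cutoff, rebrand, and query dates are hypothetical placeholders, not any real model's published cutoff. `months_between` and `model_knows_about` are illustrative helpers, not part of any tool.

```python
from datetime import date


def months_between(start: date, end: date) -> int:
    """Whole calendar months elapsed from start to end."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    # If the day of the month hasn't been reached yet, the last month isn't complete.
    if end.day < start.day:
        months -= 1
    return months


def model_knows_about(change_date: date, training_cutoff: date) -> bool:
    """A weights-only answer can only reflect changes made on or before the cutoff."""
    return change_date <= training_cutoff


# Hypothetical dates for illustration only; not any real model's cutoff.
training_cutoff = date(2023, 10, 1)
rebrand = date(2024, 3, 15)
asked_on = date(2025, 1, 20)

print(model_knows_about(rebrand, training_cutoff))  # False
print(months_between(training_cutoff, asked_on))    # 15
```

In this made-up scenario the rebrand falls after the cutoff. A model answering from memory alone cannot know about it, no matter how well the rebrand is covered online today.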

Problem 2: Retrieval Freshness (RAG)

Some AI platforms don’t just answer from their memory. They search the web first, retrieve current documents, and then generate a response grounded in what they found. This is called Retrieval-Augmented Generation, or RAG.

For these platforms, the freshness model is much closer to organic search. If your content is indexed and retrievable, the AI can cite it today.
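A toy version of the retrieval step shows why freshness works so differently here. In this sketch, the `Doc` type, the in-memory index, and the keyword-overlap scoring are simplifying assumptions that stand in for a real search backend. The generation step is left out entirely. The only point is that a page indexed days ago can be retrieved and cited immediately, with no retraining.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Doc:
    url: str
    text: str
    indexed_on: date


def tokenize(text: str) -> set[str]:
    """Lowercase words with trailing punctuation stripped."""
    return {w.strip(".,!?").lower() for w in text.split()}


def retrieve(query: str, index: list[Doc], k: int = 2) -> list[Doc]:
    """Rank indexed docs by naive keyword overlap, newest first on ties."""
    q = tokenize(query)
    hits = [(len(q & tokenize(d.text)), d) for d in index]
    hits = [(score, d) for score, d in hits if score > 0]
    hits.sort(key=lambda h: (h[0], h[1].indexed_on), reverse=True)
    return [d for _, d in hits[:k]]


def answer_with_citations(query: str, index: list[Doc]) -> dict:
    """Ground the answer in retrieved text; citations are exactly the docs used."""
    docs = retrieve(query, index)
    context = " ".join(d.text for d in docs)
    # A real system would pass `context` to an LLM here; this sketch just returns it.
    return {"context": context, "citations": [d.url for d in docs]}


# Hypothetical index: the launch page was indexed only days before the query.
index = [
    Doc("https://example.com/old-pricing", "Acme Widget pricing starts at $10", date(2022, 5, 1)),
    Doc("https://example.com/widget-pro", "Acme launched Widget Pro with pricing at $25", date(2025, 1, 10)),
    Doc("https://example.com/careers", "Join our team", date(2024, 6, 1)),
]

result = answer_with_citations("Acme Widget Pro pricing", index)
print(result["citations"])
# ['https://example.com/widget-pro', 'https://example.com/old-pricing']
```

The older pricing page is still retrieved alongside the new one. Whatever is indexed is what gets cited, stale or not.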

These two problems require completely different solutions. Optimizing for training data visibility is a long game measured in quarters. Optimizing for retrieval visibility is measured in days and weeks. If you mix them up, you’ll end up with a strategy that works for neither.

The Three Architectures and Why They Matter for Your Brand

Not all AI platforms work the same way. Knowing which architecture a platform uses tells you how to think about freshness on that platform.

1. RAG-First Platforms (The "Live" Searchers)

Perplexity is the clearest example here. Every query triggers a live web search. The model retrieves current documents, grounds its response in those documents, and the citations it shows you are the actual sources that shaped the answer.

Google AI Overviews (AIO) work similarly. The systems powering AIO retrieve current web content to generate each answer. Your Google Search Console data and your traditional SEO levers still apply here because the underlying system is retrieval-based.

The Strategy: Keep content fresh, ensure it's indexed, and make it clearly authoritative. If you aren't showing up here, it's likely a technical SEO or content relevance issue.

2. Hybrid Platforms (The "Thinkers")

ChatGPT (GPT-4 and later) and Microsoft Copilot operate in a hybrid mode. When a user asks a question, the model decides whether or not it needs to search the web.

If it searches, the citations are "causal": the retrieved content actually shaped the response. If it doesn't search, the response comes from training data, and any sources it mentions are often "post-hoc rationalizations" (the model trying to justify what it already "knew").
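The hybrid flow can be sketched as a simple router. Real assistants let the model itself decide whether to search. The keyword heuristic in `needs_search`, the `RECENCY_HINTS` set, and the stand-in `fake_search` function are all hypothetical. They exist only to show how the same platform can produce causal citations on one query and none on another.

```python
from typing import Callable

# Hypothetical heuristic: real assistants let the model decide when to search.
RECENCY_HINTS = {"latest", "new", "today", "current", "price", "pricing"}


def needs_search(query: str) -> bool:
    """Stand-in for the model's own decision to browse the web."""
    words = {w.strip("?.,!").lower() for w in query.split()}
    return bool(words & RECENCY_HINTS)


def hybrid_answer(query: str, search: Callable[[str], list[str]], from_weights: str) -> dict:
    if needs_search(query):
        sources = search(query)
        # Retrieved sources were inputs to generation, so these citations are causal.
        return {"mode": "retrieval", "sources": sources, "causal": True}
    # No retrieval: the answer comes from training weights. Any source named
    # afterwards would be a post-hoc justification, not an input.
    return {"mode": "weights", "answer": from_weights, "sources": [], "causal": False}


def fake_search(query: str) -> list[str]:
    """Hypothetical search backend that always returns one URL."""
    return ["https://example.com/widget-pro"]


print(hybrid_answer("What is Acme's latest pricing?", fake_search, "")["mode"])  # retrieval
print(hybrid_answer("Who founded Acme?", fake_search, "Acme makes widgets.")["mode"])  # weights
```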

The Strategy: You need to address both layers. Your content must be retrievable for real-time cases, but you also need to focus on authoritative third-party sources (like major publications) that influence the model's core training weights.

3. Training-Data-First Platforms (The "Knowledge" Bases)

Claude, in its base configuration, is a training-data-first platform. It doesn't browse the web unless given explicit tools. When Claude tells a user something about your brand, it’s drawing on its internal "brain" snapshot.

For these platforms, the concept of "freshness" is almost irrelevant in the short term. What matters is whether your brand was well-represented in the data the model was trained on originally.

Graphic: A modern, minimalist chart showing the Three Architectures: RAG-First (Perplexity), Hybrid (ChatGPT/Copilot), and Training-Data-First (Claude).

How CiteMetrix Approaches This

Because these platforms have such different data architectures, CiteMetrix tracks them separately rather than treating AI visibility as a single, vague number.

When we measure whether your brand is cited by Perplexity versus whether it’s cited by Claude, we’re measuring fundamentally different things:

- A Perplexity citation means your content surfaced in a real-time retrieval query. This is actionable today. If you're missing, we look at content optimization and freshness.

- A Claude citation means your brand has deep roots in the training data. This is a long-term signal. To move the needle here, you need depth and authority across the sources AI companies crawl for training, such as Wikipedia, major news outlets, and industry-leading blogs.

This distinction is also vital for accuracy monitoring. When CiteMetrix detects a hallucination (something an AI says about your brand that isn't true), the platform it occurs on tells us a lot about why it's happening.

A hallucination on Perplexity is usually a retrieval problem (it found bad information). A hallucination on Claude is usually a training problem (the "brain" learned the wrong thing a year ago). Each requires a different remediation strategy.
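Here is one way to express that reasoning in code. This is an illustration, not CiteMetrix's actual implementation. The lookup maps each platform to the architecture described above and returns the likely remediation track for a hallucination. The platform keys and messages are hypothetical. For hybrid platforms, the sketch also needs to know whether the answer was grounded in a search, because that decides which problem you're facing.

```python
from enum import Enum
from typing import Optional


class Architecture(Enum):
    RAG_FIRST = "rag_first"
    HYBRID = "hybrid"
    TRAINING_FIRST = "training_first"


# Hypothetical mapping that mirrors the three architectures described in this article.
PLATFORM_ARCHITECTURE = {
    "perplexity": Architecture.RAG_FIRST,
    "google_aio": Architecture.RAG_FIRST,
    "chatgpt": Architecture.HYBRID,
    "copilot": Architecture.HYBRID,
    "claude": Architecture.TRAINING_FIRST,
}

RETRIEVAL_FIX = "retrieval: correct or refresh the indexed pages it found"
TRAINING_FIX = "training: build accurate, authoritative third-party coverage"


def diagnose(platform: str, searched: Optional[bool] = None) -> str:
    """Return the likely cause and remediation track for a hallucination."""
    arch = PLATFORM_ARCHITECTURE[platform.lower()]
    if arch is Architecture.RAG_FIRST:
        return RETRIEVAL_FIX
    if arch is Architecture.TRAINING_FIRST:
        return TRAINING_FIX
    # Hybrid platforms: the cause depends on whether this particular answer used search.
    if searched is True:
        return RETRIEVAL_FIX
    if searched is False:
        return TRAINING_FIX
    return "unknown: check whether the answer was grounded in a web search"


print(diagnose("Perplexity"))  # retrieval: correct or refresh the indexed pages it found
```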

Screenshot: A cropped, clean view of the CiteMetrix dashboard showing the breakdown of citations across different AI models.

Your AI Visibility Action Plan

So, what should you actually do? Here is how to split your focus:

For Retrieval-First (Perplexity, Google AIO)

Treat this like modern SEO. Keep your brand content current and well-structured. Make sure your most important facts (pricing, product specs, and positioning) are clearly stated on indexed pages. If you want to see how you're currently performing, you can check your ModelScore.

For Hybrid Platforms (ChatGPT, Copilot, Gemini)

Run a two-track strategy.

- Short-term: Optimize for retrieval (same as above).

- Long-term: Build a broad, authoritative presence. Get mentioned in major publications and maintain accurate third-party coverage. This influences what the model learns in its next major training run.

For Training-Data-First (Claude, Mistral)

Stop obsessing over your blog post from three days ago. Instead, invest in the sources that feed training data. Think about the next training cycle. Earn coverage in the databases and publications that are known to be included in major training corpora.

The Bottom Line

The brand visibility game has changed. It’s no longer just about being "first" on a list of links; it’s about being the "answer" in a conversational interface.

Google frames brands as competitors (showing you a list to choose from). AI frames brands as solutions (telling you which one is best). If you aren't in the answer, you don't exist to that user.

Visual: A "Two-Track Strategy" diagram showing short-term retrieval tactics (Technical SEO/Freshness) vs. long-term training tactics (PR/Authority).

The rules are still being written, and the architectures are evolving. Perplexity today uses retrieval; Perplexity in two years might have a more sophisticated hybrid model.

The brands that win won’t be the ones who "hack" the system for a week. They’ll be the ones who track their visibility consistently enough to notice when the rules change.

Ready to see what the AI "knows" about you?

Join the beta (free) → citemetrix.com or book a demo to see how your brand stacks up across the different AI architectures.