Understanding Your ModelScore

How CiteMetrix calculates the single number that summarizes your AI visibility — and how to move it.

ModelScore is a single 0-100 number that summarizes how well AI platforms know, describe, and direct attention to your brand. It’s the metric we put on the front of every dashboard because it’s the question every operator actually wants answered: “Are we visible to AI, and is it getting better or worse?”

This article explains what’s inside that number, how it’s calculated, and how to read its movement.

What ModelScore actually measures

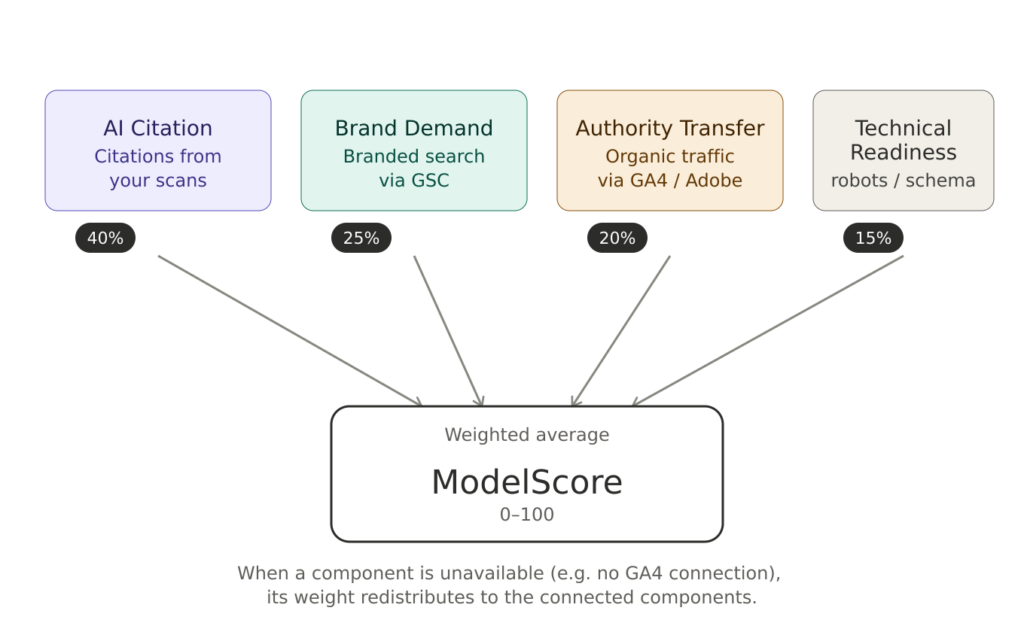

ModelScore is a weighted average of four sub-scores, each measuring a different facet of “AI visibility”:

| Component | Weight | Measures |
|---|---|---|
| AI Citation Score | 40% | How often AI platforms cite your brand when asked relevant questions, plus the sentiment and platform diversity of those citations |
| Brand Demand | 25% | Branded search volume in Google Search Console, a proxy for “do people search for your brand by name” |
| Authority Transfer | 20% | Quality of organic traffic from AI/search referrals, sourced from Google Analytics 4 or Adobe Analytics |
| Technical Readiness | 15% | Whether your site is set up so AI crawlers can read and cite you (robots.txt, llms.txt, schema markup, structured content) |

The 40-25-20-15 weighting reflects relative impact: citations are the most direct signal of AI visibility (and the only one CiteMetrix can move on its own), so they carry the most weight. Technical readiness is foundational but quick to fix once configured, so it carries the least.

What happens when a component isn’t connected

Two of the four components require an external analytics integration to compute, and a third needs no setup at all:

- Brand Demand needs Google Search Console (OAuth)

- Authority Transfer needs Google Analytics 4 (OAuth) or Adobe Analytics (BYOK)

- Technical Readiness is fully automatic — it crawls your site directly

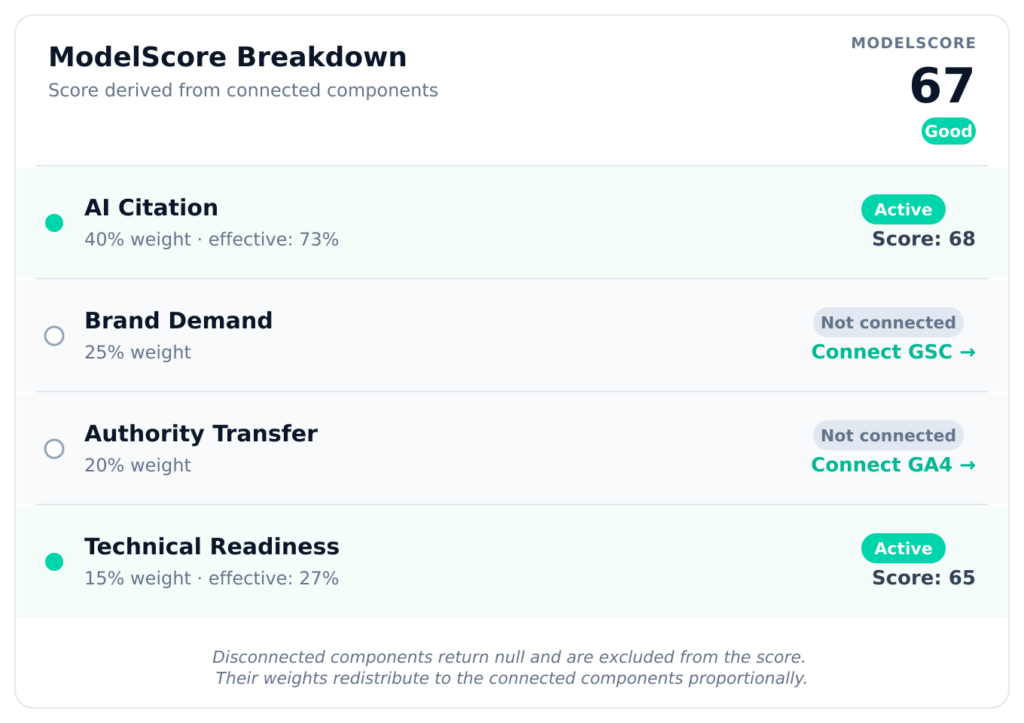

When a required integration isn’t connected, that component returns null (not zero) and is excluded from the score entirely. The remaining components’ weights are redistributed proportionally. So if you’ve connected only AI keys (no GSC, no GA4), your ModelScore is computed from AI Citations and Technical Readiness only, at an effective split of about 73% / 27% (40/55 and 15/55), not 40% / 15% with two big zeros pulling you down.
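
The redistribution described above can be sketched as follows. This is a minimal illustration, not CiteMetrix's actual implementation: the function names, the component keys, the use of `None` for an unconnected component, and the example sub-scores (60 and 80) are all assumptions. Only the 40/25/20/15 weights come from this article.

```python
from typing import Optional

# Weights from the component table; the key names are illustrative assumptions.
WEIGHTS = {
    "ai_citation": 40,
    "brand_demand": 25,
    "authority_transfer": 20,
    "technical_readiness": 15,
}

def effective_weights(components: dict) -> dict:
    """Share of the final score carried by each connected component."""
    connected = [name for name, value in components.items() if value is not None]
    total = sum(WEIGHTS[name] for name in connected)
    return {name: WEIGHTS[name] / total for name in connected}

def model_score(components: dict) -> Optional[float]:
    """Weighted average over connected components; None values are skipped."""
    shares = effective_weights(components)
    if not shares:
        return None  # nothing connected, so nothing to score
    return sum(shares[name] * components[name] for name in shares)

# Hypothetical account with only AI keys connected.
only_ai_keys = {
    "ai_citation": 60.0,
    "brand_demand": None,
    "authority_transfer": None,
    "technical_readiness": 80.0,
}
print(effective_weights(only_ai_keys))
print(round(model_score(only_ai_keys), 1))  # 65.5
```

Had the two missing components been counted as zeros instead, the same inputs would produce 36 rather than roughly 65.5, which is the penalty the null handling avoids.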

This matters because the score you see represents what CiteMetrix can actually measure, not a punishment for incomplete setup. The dashboard shows which components are contributing and which are excluded.

We recommend connecting GSC and either GA4 or Adobe Analytics even on Day 1. You won’t be penalized otherwise, but Brand Demand and Authority Transfer catch trends that AI Citation Score alone can’t see. AI may know who you are and still drive zero traffic to your site; you want to see that pattern.

The four components, in detail

AI Citation Score (40%)

Calculated from the last 30 days of citation scans. The formula:

- Base score (0-70 points): citation rate × 0.7. If 50% of your queries surfaced a citation, you get 35 points here. 100% citation rate caps at 70 points.

- Sentiment bonus (0-20 points): positive sentiment ratio across all citations × 20. If 80% of citations were positive, you get 16 bonus points.

- Platform coverage bonus (0-10 points): number of platforms that cited you × 3.33 (one third of the bonus each), capped at 10. Citations from three or more platforms earn the full bonus.

A perfect 100 here means: AI cites you for nearly every relevant query, the citations are overwhelmingly positive, and you’re appearing across all major platforms. In practice, healthy brands sit in the 50-75 range.
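
The three parts of the formula above can be combined in a short sketch. The function and parameter names are assumptions for illustration, and the per-platform value is taken as exactly one third of the 10-point bonus (the article rounds it to 3.33) so that three platforms reach the full 10 points.

```python
def ai_citation_score(citation_rate_pct: float,
                      positive_ratio: float,
                      platforms_citing: int) -> float:
    """Sketch of the AI Citation Score formula described in this article.

    citation_rate_pct: share of queries that surfaced a citation, 0-100
    positive_ratio:    share of citations with positive sentiment, 0-1
    platforms_citing:  number of platforms that cited the brand
    """
    base = min(citation_rate_pct, 100.0) * 0.7          # 0-70 points
    sentiment = min(positive_ratio, 1.0) * 20           # 0-20 points
    coverage = min(platforms_citing * 10 / 3, 10.0)     # 0-10 points
    return base + sentiment + coverage

# 50% citation rate, 80% positive, cited on two platforms:
# 35 base + 16 sentiment + about 6.67 coverage.
print(round(ai_citation_score(50, 0.8, 2), 2))  # 57.67
```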

To move this number: improve your content’s findability for queries you care about. Specifically — make sure clear answers exist on your site to the questions your customers ask. AI platforms favor content with explicit Q&A patterns, dated authoritative sources, and structured data. The Hallucinations & Remediation workflow points you at the specific pages that need attention.

Brand Demand (25%)

Pulled from Google Search Console. Measures the share of your search impressions that include a branded query (your domain name, your brand name, or close variants). Higher branded share = stronger top-of-funnel awareness, which correlates with AI citation likelihood (AI platforms weight content from sites that get organic searches for the brand itself).

The score is normalized against your total search volume, so it works for both small brands and large ones. A brand with 100k impressions where 30% are branded scores roughly the same as a brand with 10k impressions where 30% are branded.
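
To illustrate why the share is independent of site size, here is a minimal sketch of a branded-share calculation. The row format (query, impressions), the substring matching, and the brand term "acme" are hypothetical; this article does not describe how CiteMetrix matches branded queries or converts the share into a 0-100 score.

```python
from typing import Optional

def branded_share(rows: list, brand_terms: list) -> Optional[float]:
    """Fraction of impressions whose query contains any brand term."""
    total = sum(impressions for _, impressions in rows)
    if total == 0:
        return None  # no search data, so no share to report
    terms = [term.lower() for term in brand_terms]
    branded = sum(
        impressions
        for query, impressions in rows
        if any(term in query.lower() for term in terms)
    )
    return branded / total

# Two hypothetical sites, ten times apart in volume, same branded share.
large_site = [("acme pricing", 30_000), ("best crm tools", 70_000)]
small_site = [("Acme login", 3_000), ("crm comparison", 7_000)]
print(branded_share(large_site, ["acme"]))  # 0.3
print(branded_share(small_site, ["acme"]))  # 0.3
```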

To move this number: the levers are PR, brand campaigns, and content that gets people searching for you by name. CiteMetrix doesn’t directly affect this — it just tracks it.

Authority Transfer (20%)

Pulled from Google Analytics 4 (preferred) or Adobe Analytics. Measures the volume and quality of organic traffic landing on your site, with a heuristic that gives more weight to volume on a logarithmic scale (so going from 100 to 1,000 monthly organic visits is worth more than going from 10,000 to 11,000).
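
The effect of a logarithmic scale can be seen with a small sketch. The exact heuristic CiteMetrix uses is not given in this article; the base-10 logarithm and the function name below are assumptions chosen only to show the shape of the curve.

```python
import math

def log_volume_gain(visits_before: int, visits_after: int) -> float:
    """Change in log10(monthly organic visits) between two readings."""
    return math.log10(visits_after) - math.log10(visits_before)

small_site_gain = log_volume_gain(100, 1_000)      # a tenfold increase
large_site_gain = log_volume_gain(10_000, 11_000)  # a 10% increase

print(round(small_site_gain, 3))  # 1.0
print(round(large_site_gain, 3))  # 0.041
```

On this scale the jump from 100 to 1,000 visits counts for roughly 24 times as much as the jump from 10,000 to 11,000, even though both add about the same order of absolute traffic.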

The “Transfer” in the name reflects the goal: AI platforms cite content that drives engaged readers, and your organic traffic data tells AI’s training and ranking pipelines that your site is worth citing. The signal runs in both directions.

To move this number: classic SEO. Strong content that ranks for non-branded queries, technical SEO basics, link acquisition. CiteMetrix tracks the result; it doesn’t generate the traffic.

Technical Readiness (15%)

The only fully automatic component. CiteMetrix fetches three things from your domain on each scan:

- robots.txt: checks whether you’ve explicitly blocked any of the major AI crawlers (GPTBot, ChatGPT-User, CCBot, Google-Extended, anthropic-ai, PerplexityBot). Each one you allow is worth points; each block costs you. Many sites accidentally block these because their robots.txt was written before AI crawlers existed and a wildcard “Disallow: /” rule blocks them by default.

- llms.txt: an emerging convention (similar to sitemap.xml) that tells AI platforms what your most important content is. Having one at all earns 20 points.

- Homepage schema markup: checks for JSON-LD structured data and reasonable heading structure. Both contribute points.

The Technical Readiness panel in your dashboard shows each individual check pass/fail so you know exactly what to fix. Most issues take an hour or less to address and produce immediate score movement on the next scan.
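
If you want to check your own robots.txt before the next scan, the crawler check can be approximated with Python's standard-library `urllib.robotparser`. This is a sketch, not the scanner CiteMetrix runs: the function name, the example.com URL, and the two sample files are assumptions, and the crawler list is the one named in this article.

```python
from urllib.robotparser import RobotFileParser

# Crawler user agents listed in this article.
AI_CRAWLERS = [
    "GPTBot", "ChatGPT-User", "CCBot",
    "Google-Extended", "anthropic-ai", "PerplexityBot",
]

def blocked_ai_crawlers(robots_txt: str,
                        url: str = "https://example.com/") -> list:
    """Return the AI crawlers that may not fetch `url` under this robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]

# A legacy file with a wildcard block catches every AI crawler.
legacy = "User-agent: *\nDisallow: /\n"
# Adding an explicit group for one crawler lets that crawler through.
updated = "User-agent: GPTBot\nAllow: /\n\nUser-agent: *\nDisallow: /\n"

print(blocked_ai_crawlers(legacy))   # all six crawlers
print(blocked_ai_crawlers(updated))  # every crawler except GPTBot
```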

Rating bands

CiteMetrix translates raw ModelScore values into named bands:

| Score | Rating | Reading |
|---|---|---|
| 80-100 | Excellent | AI cites you reliably, accurately, and across platforms. Maintain. |
| 60-79 | Good | Strong baseline. A few platforms or query types under-cite you; focus there. |
| 40-59 | Fair | Mixed picture. AI knows you, but coverage is uneven and accuracy is inconsistent. |
| 20-39 | Poor | AI either doesn’t know you well or describes you incorrectly. High-leverage problems to fix. |
| 0-19 | Critical | Effectively invisible to AI, or being misrepresented broadly. Treat as urgent. |

Most brands new to AI visibility tracking start in Fair or Poor. Movement of 5-10 points per month after focused remediation is typical. We’ve seen brands move from Poor to Good in a quarter when they take the hallucination workflow seriously.
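
The band lookup in the table above can be sketched as a simple threshold check. The function name is hypothetical, and the handling of fractional scores (a score belongs to the band whose lower bound it meets) is an assumption, since the table lists whole-number ranges only.

```python
# Lower bound of each band, highest first, taken from the rating table.
BANDS = [
    (80, "Excellent"),
    (60, "Good"),
    (40, "Fair"),
    (20, "Poor"),
    (0, "Critical"),
]

def rating_band(score: float) -> str:
    """Map a 0-100 ModelScore to its named rating band."""
    if not 0 <= score <= 100:
        raise ValueError("ModelScore must be between 0 and 100")
    for lower_bound, name in BANDS:
        if score >= lower_bound:
            return name
    raise AssertionError("unreachable: 0 is the lowest bound")

print(rating_band(35))  # Poor
print(rating_band(62))  # Good
```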

Reading movement

A single ModelScore is a snapshot. The interesting question is the trend.

CiteMetrix stores a daily snapshot of your ModelScore plus each component, so you can see week-over-week, month-over-month, and quarter-over-quarter changes. The chart on your dashboard plots this over time.
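
A period-over-period change is just the difference between two daily snapshots. The sketch below assumes snapshots are available as a date-to-score mapping; that structure, the function name, and the sample values are hypothetical and are not a CiteMetrix export format.

```python
from datetime import date, timedelta
from typing import Optional

def score_change(snapshots: dict, as_of: date, days: int) -> Optional[float]:
    """Difference between the score on `as_of` and the score `days` earlier.

    Returns None when either snapshot is missing.
    """
    current = snapshots.get(as_of)
    earlier = snapshots.get(as_of - timedelta(days=days))
    if current is None or earlier is None:
        return None
    return current - earlier

# Hypothetical daily snapshots (only three days shown).
snapshots = {
    date(2024, 5, 1): 48.0,
    date(2024, 5, 24): 52.0,
    date(2024, 5, 31): 55.0,
}
today = date(2024, 5, 31)
print(score_change(snapshots, today, 7))   # 3.0
print(score_change(snapshots, today, 30))  # 7.0
print(score_change(snapshots, today, 90))  # None
```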

Things to watch for:

- Sudden drops — usually a content change broke schema markup, a new robots.txt blocked an AI crawler, or a hallucination was introduced when a competitor or news cycle confused AI about your facts.

- Slow climbs — what success looks like. Citation scores grow as AI’s training data catches up with your content updates and as your brand demand grows.

- Flat scores — you’re not getting worse, but you’re also not benefiting from any of CiteMetrix’s recommendations. The hallucination remediation workflow is the fastest mover when scores plateau.

What ModelScore is not

A few things ModelScore deliberately does not measure, despite frequent requests:

- Total revenue or conversions from AI traffic — that’s GA4’s job. ModelScore measures the upstream signal (visibility), not the downstream outcome (revenue). The two correlate but aren’t the same.

- Sentiment alone: sentiment is one input to AI Citation Score, not the whole of it. Two brands with the same citation rate, one with 100% negative coverage and one with 100% positive coverage, differ by the full 20-point sentiment bonus. Sentiment matters; it’s just not the whole picture.

- Competitor scoring — ModelScore is yours alone. Competitor visibility is tracked separately in the Competition section, with its own metrics.

- A guarantee — AI platforms change their training data, prompt handling, and citation behavior constantly. ModelScore can shift even when you’ve changed nothing on your end. The trend is what to trust, not the daily reading.

Next steps

- If your score is in Fair or below, work through Hallucinations & Remediation first — it’s the fastest mover.

- If Brand Demand or Authority Transfer is showing as “not connected”, connect GSC and GA4 to fill in the picture.

- If Technical Readiness is below 50, follow the Technical Readiness checklist — usually a 30-minute fix.