Part of the series: The AI Visibility Gap

Imagine a salesperson who mentions your company to every prospect they meet. Sounds great, right?

Now imagine that every time they mention you, they follow it with "but you might want to consider some alternatives." Or "they're a solid option, though their customer support has been criticized." Or "they used to be the market leader, but newer competitors have caught up."

That salesperson is working against you while appearing to work for you. And that's exactly what's happening with AI platforms right now for brands that are tracking citation rates but not sentiment.

Being mentioned is not the same as being recommended

There's a natural tendency to treat AI visibility as binary: either the AI mentions your brand or it doesn't. And citation rate is an important metric: you can't win if you're not in the conversation.

But there's a massive difference between being mentioned and being endorsed.

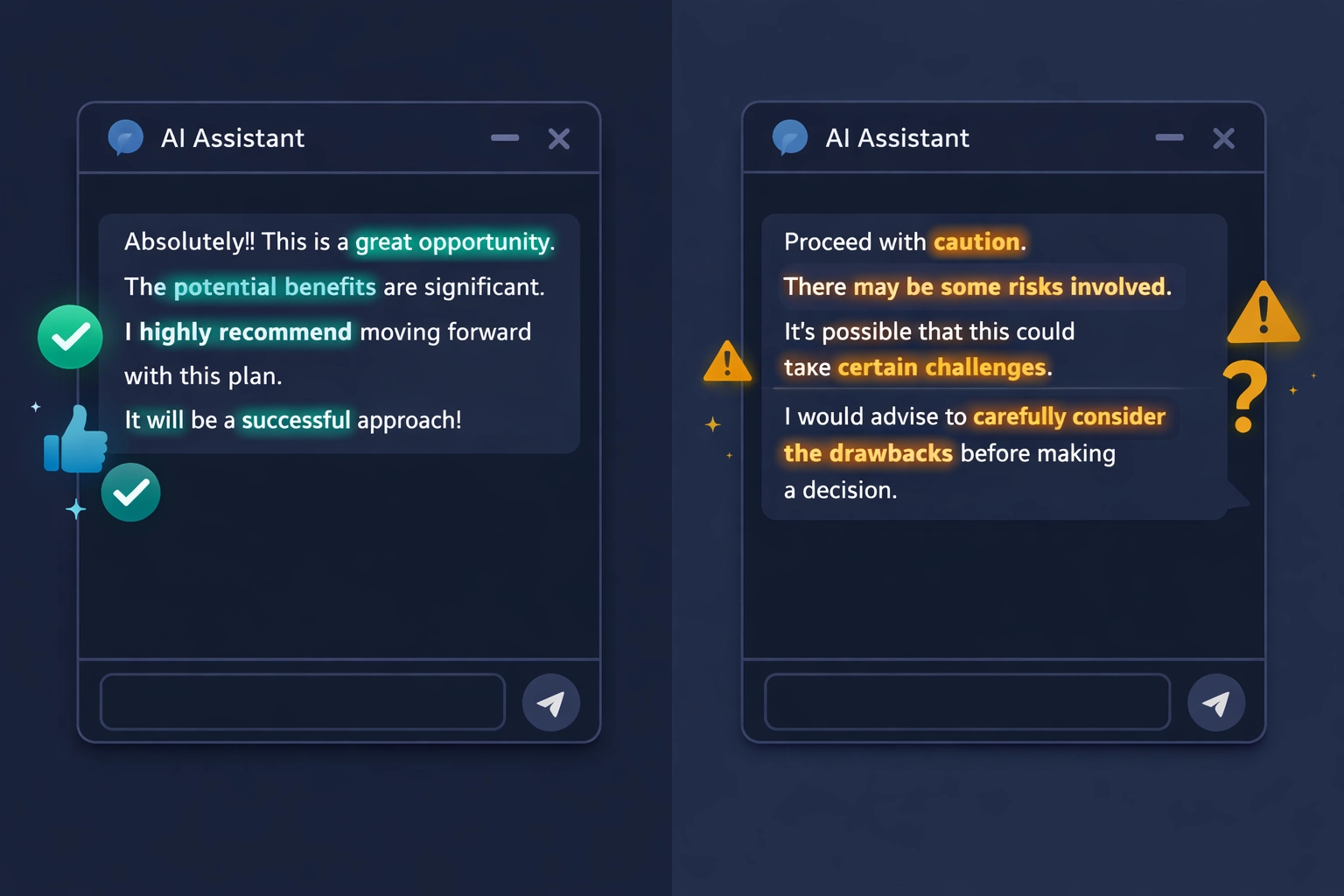

When someone asks Perplexity "What's the best CRM for small businesses?", the AI might list eight options. Your brand might be one of them. That's a citation. But the words surrounding that citation matter enormously:

"Salesforce is the industry standard with the most comprehensive feature set."

vs.

"Salesforce is a popular option, though many small businesses find it overly complex and expensive for their needs."

Both are citations. Both mention the brand. One is a recommendation. The other is a warning dressed up as a mention.

If you're only counting whether you're mentioned, without measuring how you're mentioned, you're looking at a map without contour lines. You can see where you are, but you can't see whether you're on a mountain or in a valley.

The sentiment spectrum is wider than you think

AI sentiment isn't just positive or negative. It operates on a spectrum that's more nuanced than most marketers expect:

Strong positive: The AI actively recommends your brand, uses phrases like "best for," "ideal choice," or "leading platform." This is the gold standard: the AI is functioning as an unpaid advocate.

Mild positive: You're mentioned favorably but not emphatically. "A good option" or "worth considering." Decent, but not the kind of language that closes deals.

Neutral: You're listed alongside competitors without any evaluative language. The AI treats you as interchangeable with alternatives. Not harmful, but not helpful either.

Qualified positive: The AI says something nice and then immediately undercuts it. "Known for its powerful features, though some users report a steep learning curve." This is the most dangerous category because it looks like a positive mention if you're not reading carefully.

Negative: The AI explicitly steers people away. "Has faced criticism for…" or "Competitors like X and Y offer better value." Rare for established brands, but devastating when it happens.
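To make the five categories concrete, here is a rough sketch of how a single AI-generated sentence could be sorted into them. It is a keyword heuristic, not how any of the tools mentioned in this article score sentiment. The phrase lists reuse the example wording above plus a few assumed praise words, and the function name `classify_mention` is hypothetical.

```python
import re

# Cue phrases taken from the examples in this article. The PRAISE list is an
# assumption added for illustration; a real audit would need far more cues.
STRONG_POSITIVE = ("best for", "ideal choice", "leading platform")
MILD_POSITIVE = ("a good option", "worth considering")
PRAISE = ("known for", "powerful", "popular", "solid")
NEGATIVE = ("has faced criticism", "offer better value")

# Words that typically introduce the "undercut" half of a qualified mention.
UNDERCUT = re.compile(r"\b(though|however|but)\b")


def classify_mention(sentence: str) -> str:
    """Place one sentence about a brand on the five-step sentiment spectrum."""
    text = sentence.lower()
    strong = any(phrase in text for phrase in STRONG_POSITIVE)
    mild = any(phrase in text for phrase in MILD_POSITIVE + PRAISE)

    if any(phrase in text for phrase in NEGATIVE):
        return "negative"
    if (strong or mild) and UNDERCUT.search(text):
        # Praise followed by a qualifier: the easy-to-miss category.
        return "qualified positive"
    if strong:
        return "strong positive"
    if mild:
        return "mild positive"
    return "neutral"


print(classify_mention(
    "Known for its powerful features, though some users report a steep learning curve."
))
```

The point of the sketch is the ordering of the checks: a sentence has to be tested for an undercutting qualifier before it is counted as positive, otherwise qualified praise gets filed under the wrong heading.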

Most brands assume they're in the positive range because they have strong brand equity in the real world. But AI platforms don't know your brand the way your customers do. They know your brand the way the internet talks about your brand, and that's a very different thing.

Sentiment varies by platform, and that's a clue

Here's something that surprises most marketers when they first look at AI sentiment data: the same brand can have strongly positive sentiment on one platform and neutral or negative sentiment on another.

ChatGPT might describe your product enthusiastically. Claude might be more measured. Perplexity might cite a review article that happens to be critical. Google's AI Overviews might pull from a comparison page where you ranked third.

This variation isn't random. Each platform draws from different source material, weights different types of content, and has different tendencies in how it frames responses. Understanding where your sentiment is strong and where it's weak tells you something actionable about the information ecosystem around your brand.

If Perplexity consistently describes you more negatively than other platforms, it's likely because the web sources Perplexity is drawing from skew critical. That's a content problem you can fix by creating or earning content that provides a more accurate, more favorable picture for those sources to reference.

If ChatGPT is enthusiastic but Claude is lukewarm, the difference might be in training data recency or the types of documents each model emphasizes. That's useful intelligence for your content strategy.

Sentiment isn't just a score; it's a diagnostic. It tells you where the perception problem lives and gives you a starting point for fixing it.

The compounding effect of AI sentiment

Here's what makes this urgent: AI sentiment compounds.

When an AI platform describes your brand with qualified praise or mild negativity, that framing gets reinforced every time a user sees it. Hundreds of people a day might receive the same lukewarm description of your company. Each one forms a slightly negative first impression. Some of them never visit your site. Some of them visit but arrive already skeptical. Some of them go to a competitor who got the unqualified recommendation.

Over weeks and months, that steady drip of tepid AI mentions erodes your brand's competitive position in ways that don't show up in any traditional metric. Your traffic might not decline. Your rankings might hold steady. But your conversion rate drifts downward because prospects are arriving pre-influenced by an AI that damned you with faint praise.

And because you're not measuring it, you attribute the decline to market conditions, competitive pressure, or seasonal factors, never realizing that an invisible channel has been softly undermining your brand perception at scale.

How to audit your AI sentiment right now

Open ChatGPT, Perplexity, and Claude. Ask each one these questions, substituting your brand:

- "What do you think of [your brand]?"

- "Would you recommend [your brand] for [your target use case]?"

- "[Your brand] vs. [top competitor]"

- "What are the pros and cons of [your brand]?"

- "What's the best [your category] tool?"

Don't just check whether you're mentioned. Read the language carefully. Look for qualifiers, hedging, "however" statements, and subtle steering toward competitors. Compare the tone of your mention against how the AI describes your top competitor. That comparison is often more revealing than your own mention in isolation.
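If you want to run the same audit every time, it helps to generate the questions from a template so the wording never drifts between checks. The sketch below only builds the prompts and a platform-by-prompt checklist; it does not call any AI platform. The brand, competitor, and use case shown are made-up placeholders, and the function names are hypothetical.

```python
from itertools import product

# The three platforms named in the audit steps above.
PLATFORMS = ("ChatGPT", "Perplexity", "Claude")

# The five audit questions, with the bracketed parts turned into fields.
PROMPT_TEMPLATES = (
    "What do you think of {brand}?",
    "Would you recommend {brand} for {use_case}?",
    "{brand} vs. {competitor}",
    "What are the pros and cons of {brand}?",
    "What's the best {category} tool?",
)


def build_audit_prompts(brand, use_case, competitor, category):
    """Fill in the five audit questions for one brand."""
    values = {
        "brand": brand,
        "use_case": use_case,
        "competitor": competitor,
        "category": category,
    }
    return [template.format(**values) for template in PROMPT_TEMPLATES]


def build_checklist(prompts):
    """Pair every platform with every prompt so no combination is skipped."""
    return list(product(PLATFORMS, prompts))


# Placeholder values for illustration only.
prompts = build_audit_prompts("Acme CRM", "small businesses", "Globex CRM", "CRM")
for platform, prompt in build_checklist(prompts):
    print(f"[{platform}] {prompt}")
```

Three platforms and five questions give fifteen responses to read per audit, which is a useful number to keep in mind before deciding how often you can realistically do this by hand.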

Doing this manually doesn't scale

Sentiment is particularly hard to track manually because it changes gradually. You won't notice the shift from "highly recommended" to "a solid option" in a single check. It happens over model updates, over weeks, and it requires consistent measurement to catch the trajectory before it becomes a problem.

Several AI visibility platforms track sentiment systematically. A few to evaluate:

CiteMetrix (citemetrix.com): Per-citation sentiment scoring across six AI platforms with trend tracking over time. Breaks down sentiment by platform so you can see where your perception is strong and where it's slipping. Includes remediation tools for improving the content that influences AI responses. Disclosure: this is my platform.

Profound (profound.com): Sentiment analysis across 10+ AI models with enterprise reporting. Well-suited for larger organizations managing multiple brands.

Peec AI (peec.ai): Tracks sentiment across five platforms with per-citation scoring. Good monitoring foundation without remediation features.

Scrunch AI (scrunch.ai): Sentiment tracking across four platforms with a focus on competitive intelligence. Geared toward agencies and brand teams.

Whatever approach you take, the key is to track sentiment as a trend, not a snapshot. A single check tells you where you stand today. A trend line tells you whether the AI's perception of your brand is improving, holding steady, or quietly deteriorating.
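Here is a minimal sketch of what "trend, not snapshot" can mean in practice, assuming you record one sentiment label per week for a given platform. The numeric scores assigned to each label are my own assumption (any consistent scale works), the sample labels are invented, and the slope is an ordinary least-squares fit rather than the method any particular tool uses.

```python
# Assumed numeric scale for the five categories; adjust to taste.
SCORES = {
    "strong positive": 2,
    "mild positive": 1,
    "neutral": 0,
    "qualified positive": -1,
    "negative": -2,
}


def trend_slope(scores):
    """Least-squares slope of scores against their position (0, 1, 2, ...)."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two observations to compute a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(scores))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    return numerator / denominator


def describe_trend(labels, tolerance=0.05):
    """Summarize a series of weekly labels as improving, steady, or deteriorating."""
    slope = trend_slope([SCORES[label] for label in labels])
    if slope > tolerance:
        return "improving"
    if slope < -tolerance:
        return "deteriorating"
    return "holding steady"


# Invented example: five weekly checks on one platform.
weekly_labels = [
    "strong positive",
    "strong positive",
    "mild positive",
    "mild positive",
    "neutral",
]
print(describe_trend(weekly_labels))  # prints "deteriorating"
```

Notice that no single week in that example looks alarming on its own. The last check is still "neutral." It is only the slope across all five that shows the slide.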

Your best salesperson might be your worst

If AI platforms are increasingly functioning as a recommendation engine (and every usage trend says they are), then sentiment is the metric that determines whether that engine is working for you or against you.

You can have strong brand awareness in AI responses and still lose deals because the quality of those mentions is working against you. Citation rate is your reach. Sentiment is your conversion. You need both.

The brands that monitor sentiment early will be the first to spot and fix the narratives that are costing them customers they never knew they lost.

Ready to see what AI really says about your brand? Get your ModelScore and start tracking your AI sentiment across platforms → citemetrix.com

Eric Richmond is the founder of Expert SEO Consulting and has spent 20+ years helping brands navigate changes in how people find information online. He writes about the intersection of AI, search, and brand visibility.